It describes the different components of Semantic Web applications and their use: the eponymous Working Ontologist needs to understand how applications are structured in order to create useful, usable models.

The components are:

RDF Parsers and Serializers

Parsers translate text written in N-Triples, Turtle or RDF/XML into "triples in the RDF data model".

Serializers do the reverse.

Incidentally, if you parse from a text file, and then reserialise (I have switched to using "s" instead of "z" here because I'm not quoting from the book and I'm English) you won't necessarily get an identical output file…
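To see why the output can differ, here is a minimal Python sketch of a parser and serializer. It is a toy under stated assumptions: it only copes with URIs in angle brackets and plain quoted literals, the `example.org` URIs are made up, and the names `parse` and `serialize` are mine rather than the book's. The point is that the triples survive the round trip even though comments, spacing and line order do not.

```python
import re

# Toy N-Triples handling: URIs in <...> and plain "literals" only.
# Real N-Triples also has blank nodes, language tags, datatypes and escapes.
TERM = r'(<[^>]*>|"[^"]*")'
LINE = re.compile(r'^\s*' + TERM + r'\s+' + TERM + r'\s+' + TERM + r'\s*\.\s*$')

def parse(text):
    """Turn N-Triples-style text into a set of (subject, predicate, object) tuples."""
    triples = set()
    for line in text.splitlines():
        if not line.strip() or line.lstrip().startswith("#"):
            continue  # skip blank lines and comments
        match = LINE.match(line)
        if match is None:
            raise ValueError(f"cannot parse line: {line!r}")
        triples.add(match.groups())
    return triples

def serialize(triples):
    """Write triples back out, one per line, in sorted order."""
    return "".join(f"{s} {p} {o} .\n" for s, p, o in sorted(triples))

original = """\
# who wrote what
<http://example.org/hamlet>   <http://example.org/author> "Shakespeare" .
<http://example.org/emma> <http://example.org/author> "Austen" .
"""

triples = parse(original)
round_tripped = serialize(triples)

print(round_tripped == original)          # the text has changed...
print(parse(round_tripped) == triples)    # ...but the triples have not
```

The comment has gone, the extra spaces have gone and the two lines have swapped places, yet a parser reading either file ends up with the same two triples.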

RDF Stores

Enhanced databases: as well as storing/sorting data, they also "merge information from multiple data sources".
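A rough way to picture the merging, assuming triples are held as plain Python tuples and that both sources use the same URI for the same thing (the `example.org` names and the `describe` helper are invented for illustration): merging is then just a set union, with duplicated facts collapsing into one. Real stores have more to worry about, blank nodes for a start.

```python
# Triples as (subject, predicate, object) tuples; the example.org URIs are made up.
EX = "http://example.org/"

diary_source = {
    (EX + "alice", EX + "attends", EX + "conference"),
    (EX + "conference", EX + "heldIn", EX + "London"),
}
places_source = {
    (EX + "conference", EX + "heldIn", EX + "London"),   # same fact, second source
    (EX + "London", EX + "locatedIn", EX + "England"),
}

# Both sources use the same URIs, so merging is a set union
store = diary_source | places_source

def describe(store, subject):
    """Return every (predicate, object) pair recorded for a subject."""
    return sorted((p, o) for s, p, o in store if s == subject)

print(len(store))                           # four triples in, three distinct facts out
print(describe(store, EX + "conference"))
```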

RDF Query Engines

Enable the retrieval of information from an RDF store "according to structured queries".

For the uninitiated (i.e. me), a query language is simply a programming language designed for retrieving information from databases. And the RDF query language that the W3C is standardising is called SPARQL, which is the subject of chapter 5. But, tantalisingly, the authors reveal that it "includes a protocol for communicating queries and results so that a query engine can act as a web service". My guess is that means it enables human beings to read the results: hopefully when I read the next chapter I'll learn if my attempt to serialise that sentence was successful or not…

What it definitely means is that the results provide "another source of data for the semantic web."
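As a sketch of what "structured queries" might look like in practice, here is a toy pattern matcher in Python. It is not SPARQL and not how any real engine is built: the `ex:` names, the `query` and `match_pattern` functions and the convention that variables start with `?` are all assumptions made for the example. Each pattern is a triple with some slots left as variables, and the engine returns every combination of values that fits all the patterns at once.

```python
def match_pattern(pattern, triple, bindings):
    """Try to extend bindings so that pattern matches triple; return None on failure."""
    new = dict(bindings)
    for term, value in zip(pattern, triple):
        if term.startswith("?"):          # a variable
            if new.setdefault(term, value) != value:
                return None               # already bound to something else
        elif term != value:               # a constant that doesn't match
            return None
    return new

def query(store, patterns):
    """Return every set of variable bindings that satisfies all the patterns."""
    solutions = [{}]
    for pattern in patterns:
        solutions = [
            extended
            for bindings in solutions
            for triple in store
            if (extended := match_pattern(pattern, triple, bindings)) is not None
        ]
    return solutions

store = {
    ("ex:alice", "ex:attends", "ex:conference"),
    ("ex:bob", "ex:attends", "ex:conference"),
    ("ex:conference", "ex:heldIn", "ex:London"),
}

# Roughly: SELECT ?who ?where WHERE { ?who ex:attends ?event . ?event ex:heldIn ?where }
results = query(store, [("?who", "ex:attends", "?event"),
                        ("?event", "ex:heldIn", "?where")])
people = sorted(r["?who"] for r in results)
print(people)
```

The results are themselves just names and values, which is presumably why they can be fed back in as more data.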

Applications

"An application has some work that it performs with the data it processes … using some programming language that accesses the RDF store via queries (processed with the RDF query engine)."

I also perform some work with the data I process. That makes me almost, but not quite, an application.

Anyway, the authors list the following "typical RDF applications":

- calendar/map integration, enabling information from several different people's diaries/"points of interest gathered from different web sites" to be displayed in one place

- annotation, allowing a variety of users to tag information with keywords that have URIs

- content indexing of resources available in various places/stores

Most RDF systems also include a converter, which allows the system to access data – like spreadsheets, relational databases and HTML – that isn't stored in a serialised form, but is readily convertible into something that can be read by a parser.
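Here is a minimal sketch of what such a converter could do with a spreadsheet, assuming the sheet has been exported as CSV. The mapping (one row per subject, one column per predicate) is just one plausible choice, and the `BASE` namespace and `rows_to_triples` name are hypothetical. It also does no escaping of awkward characters in cell values, which a real converter would need.

```python
import csv
import io

BASE = "http://example.org/"  # hypothetical namespace for this sketch

def rows_to_triples(csv_text, key_column):
    """Treat each row as a subject and each remaining column as a predicate."""
    triples = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        subject = f"<{BASE}{row[key_column]}>"
        for column, value in row.items():
            if column == key_column or not value:
                continue  # skip the key itself and empty cells
            triples.append((subject, f"<{BASE}{column}>", f'"{value}"'))
    return triples

spreadsheet = """\
id,name,city
p1,Alice,London
p2,Bob,
"""

triples = rows_to_triples(spreadsheet, "id")

# Write the result out in an N-Triples-like form that a parser could read
n_triples = "".join(f"{s} {p} {o} .\n" for s, p, o in triples)
print(n_triples)
```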

Microformats & RDFa

Microformats are ways of tagging commonly used web-page items (e.g. events, business cards), so that they can be used by RDF stores. The problem with them is that they need a controlled vocabulary and a dedicated parser.

RDFa is designed to get round that issue by using "the attribute tags in HTML to embed information that can be parsed into RDF". This is good because:

- it's easier to extract the RDF data from pages marked up for this purpose

- it allows the content author "to express the intended meaning of a web page inside the web page itself. This ensures that the RDF data in the document matches the intended meaning of the document itself."
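To get a feel for how data can ride along inside the HTML attributes, here is a very small sketch using Python's built-in `html.parser`. It only looks at three RDFa attributes (`about`, `property` and `content`) and ignores everything else the RDFa specification covers, such as prefixes, `vocab`, `typeof` and nested subjects. The class name `TinyRDFaExtractor`, the sample page and its `example.org` URI are invented for the example.

```python
from html.parser import HTMLParser

class TinyRDFaExtractor(HTMLParser):
    """Collects (subject, property, value) from about/property/content attributes.

    A toy: it ignores vocab, prefixes, typeof, resource and nested subjects.
    """
    def __init__(self):
        super().__init__()
        self.subject = None      # most recent `about` value seen
        self.pending = None      # property whose text content we're waiting for
        self.triples = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if "about" in attrs:
            self.subject = attrs["about"]
        if "property" in attrs:
            if "content" in attrs:   # value given explicitly in the attribute
                self.triples.append((self.subject, attrs["property"], attrs["content"]))
            else:                    # value is the element's text
                self.pending = attrs["property"]

    def handle_data(self, data):
        if self.pending and data.strip():
            self.triples.append((self.subject, self.pending, data.strip()))
            self.pending = None

    def handle_endtag(self, tag):
        self.pending = None      # stop waiting once the element closes

page = """
<div about="http://example.org/event1">
  <span property="dc:title">Ontology Workshop</span>
  on <span property="dc:date" content="2024-05-01">1st May</span>.
</div>
"""

extractor = TinyRDFaExtractor()
extractor.feed(page)
extractor.close()
for triple in extractor.triples:
    print(triple)
```

A human reader sees "Ontology Workshop on 1st May"; the extractor sees a title and a machine-readable date, both taken from the same page.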

Nice to end a chapter with the sense that I actually understand something… Even though we're not in Kansas any more…

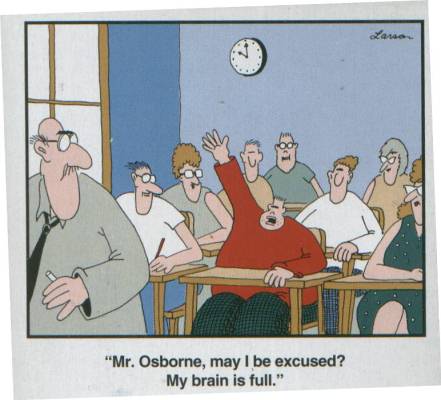

And - urk! Just noticed that chapter 5 is almost as long as chapters 1-4 put together. I may bneed to b

|

| How reading this book makes me feel. |